Papers

This page lists my published and forthcoming papers. For work in progress, see my Work in Progress page. For books, see Books. For citations to my publications, see my Google Scholar page.

-

John MacFarlane, “Pinning Down Plato’s Protagoras”, in Truth and Relativism in Ancient Philosophy, ed. Tamer Nawar and Matthew Duncombe (Cambridge: Cambridge University Press, forthcoming). [preprint]

Protagoras is often mentioned in discussions of relativism about truth, on the strength of the position Plato attributes to him in the Theaetetus: “as each thing appears to me, so it is for me, and as it appears to you, so it is for you” (152a). Many consider him the first truth relativist, and Plato’s famous self-refutation argument against him is mentioned in virtually every general discussion of truth relativism. I distinguish a number of possible positions about the content of appearances which Plato might be attributing to Protagoras, only some of which are forms of relativism about truth. I argue that the textual evidence is most consistent with two of these positions, which I call Relational Properties and Moderate Relativism. However, it is not clear whether Plato had the means to distinguish between these positions. I go on to suggest that, far from rejecting Protagoras’s view of the contents of appearances, Plato shares it. Either Protagoras is not a relativist about truth, or Plato is one too.

-

John MacFarlane, “Is Logic a Normative Discipline?”, in Oxford Handbook of Philosophy of Logic, ed. Filippo Ferrari and Elke Brendel and Massimiliano Carrara and Ole Hjortland and Gil Sagi and Gila Sher and Florian Steinberger (Oxford: Oxford University Press, forthcoming). [preprint]

Is logic a normative discipline? That depends on what one means by “normative discipline.” There is a weak sense in which logic is clearly normative—but so is every other science. In this chapter we consider whether logic might count as a normative discipline in some more distinctive and demanding sense. A number of authors have taken a “normative turn” and suggested that fundamental logical disagreements bottom out in normative ones. This thesis seems hard to maintain unless basic logical notions like validity can be analyzed in normative terms, but proponents of the normative turn have been reluctant to embrace this conclusion. We consider the reasons why, focusing on Hartry Field’s work.

-

John MacFarlane, “Why Future Contingents Are Not All False”, Analytic Philosophy 66 (2025). [preprint] [published version]

This is my contribution to a book symposium on Patrick Todd’s book The Open Future: Why Future Contingents Are All False (Oxford: Oxford University Press, 2021). Todd argues for a modified Peircean view on which all future contingents are false. According to Todd, this is the only view that makes sense if we fully embrace an open future, rejecting the idea of actual future history. I argue that supervaluational accounts, on which future contingents are neither true nor false, are fully consistent with the metaphysics of an open future. I suggest that it is Todd’s failure to distinguish semantic and postsemantic levels that leads him to suppose otherwise. I also show how one can resist Todd’s argument (with Brian Rabern) that the conceptual possibility of omniscience requires us to reject Retro-closure (φ → Was_n Will_n φ).

-

John MacFarlane, “Belief: What is it Good for?”, Erkenntnis (2023). [preprint] [published version]

“Absolutely nothing,” say the radical Bayesians. “Simplifying decisions,” say the moderates. “Providing premises in practical reasoning,” say the epistemologists. “Coordinating with others,” say I. It is hard to see how to construct an adequate theory of rational behavior without using a graded notion of belief, such as credence. But once we have credence, what role is left for belief? After surveying some answers to this question, I will explore the idea that belief is in a different line of work altogether. Its job is not to rationalize and explain an agent’s behavior, but to track what the agent would accept as a reason. Although some philosophers have seen the connection between belief and reasons, they have tended to see reasons as part of a theory of rational action. This locates belief in the rationalizing and explaining business, where it must vie with credence. In contrast, I argue that reasons play no essential role in an account of individual rationality; they are important because we need to coordinate with others. Credence and belief thus answer to separate needs.

-

John MacFarlane, “Equal Validity and Disagreement: Comments on Baghramian and Coliva’s Relativism”, Analysis 82 (2022), 499–506. [preprint] [published version]

Baghramian and Coliva (2020) have correctly identified the crux of the problem facing truth relativism: reconciling the idea that two parties to a dispute genuinely disagree, with the idea that their standpoints are (in some sense) equally valid and their judgments equally correct. I explain how my view aims to reconcile Disagreement with (something worth calling) Equal Validity, and I discuss Baghramian and Coliva’s charge that I have secured, at best, a weak simulacrum of either.

-

John MacFarlane, “Lecture I: Vagueness and Communication”, Journal of Philosophy 117 (2020), 593–616. [preprint] [published version]

I can say that a building is tall and you can understand me, even if neither of us has any clear idea exactly how tall a building must be in order to count as tall. This mundane fact poses a problem for the view that successful communication consists in the hearer’s recognition of the proposition a speaker intends to assert. The problem cannot be solved by the epistemicist’s usual appeal to anti-individualism, because the extensions of vague words like ‘tall’ are contextually fluid and can be constrained significantly by speakers’ intentions. The problem can be seen as a special case of a more general problem concerning what King has called “felicitous underspecification.” Traditional theories of vagueness offer nothing that can help with this problem. Appeals to diagonalization do not help either. A more radical solution is needed.

-

John MacFarlane, “Lecture II: Seeing Through the Clouds”, Journal of Philosophy 117 (2020), 617–642. [preprint] [published version]

One approach to the problem is to keep the orthodox notion of a proposition but innovate in the theory of speech acts. A number of philosophers and linguists have suggested that, in cases of felicitous underspecification, a speaker asserts a “cloud” of propositions rather than just one. This picture raises a number of questions: what norms constrain a “cloudy assertion,” what counts as uptake, and how is the conversational common ground revised if it is accepted? I explore three different ways of answering these questions, due to Braun and Sider, Buchanan, and von Fintel and Gillies. I argue that none of them provide a good general response to the problem posed by felicitous underspecification. However, the problems they face point the way to a more satisfactory account, which innovates in the theory of content rather than the theory of speech acts.

-

John MacFarlane, “Lecture III: Indeterminacy as Indecision”, Journal of Philosophy 117 (2020), 643–667. [preprint] [published version]

This lecture presents my own solution to the problem posed in Lecture I. Instead of a new theory of speech acts, it offers a new theory of the contents expressed by vague assertions, along the lines of the plan expressivism Allan Gibbard has advocated for normative language. On this view, the mental states we express in uttering vague sentences have a dual direction of fit: they jointly constrain the doxastic possibilities we recognize and our practical plans about how to draw boundaries. With this story in hand, I reconsider some of the traditional topics connected with vagueness: bivalence, the sorites paradox, higher-order vagueness, and the nature of vague thought. I conclude by arguing that the expressivist account can explain, as its rivals cannot, what makes vague language useful.

-

John MacFarlane, “Review of Andrew Bacon, Vagueness and Thought”, Philosophical Review 129 (2020), 153–158. [preprint] [published version]

-

John MacFarlane, “On Probabilistic Knowledge”, Res Philosophica 97 (2020), 97–108. [preprint]

This is a lightly revised version of my comments from the Author Meets Critics symposium on Sarah Moss’s Probabilistic Knowledge at the Central Division APA, Denver, February 20, 2019.

-

John MacFarlane, “В каком смысле, если он вообще есть, логика нормативна по отношению к мышлению? [In What Sense (If Any) Is Logic Normative for Thought?]”, in Современная логика: Основания, предмет и перспективы развития [Modern Logic: Its Subject Matter, Foundations and Prospects], ed. Д.В. Зайцева [D. Zaitsev] (Москва [Moscow]: ИД Форум [Forum], 2018). [published version] (This is a Russian translation of my 2004 Central APA Talk, translated by Vasilyi Shangin, with help from Ethan Nowak.)

Logic is often said to provide norms for thought or reasoning. Indeed, this idea is central to the way in which logic has traditionally been defined as a discipline, and without it, it is not clear how we would distinguish logic from the disciplines that crowd it on all sides: psychology, metaphysics, mathematics, and semantics. But it turns out to be surprisingly hard to say how facts about the validity of inferences relate to norms for reasoning, and some philosophers have concluded that the whole idea is confused. In this talk I will survey a space of possible “bridge principles” connecting logical facts with norms for reasoning. After discussing some considerations relevant to choosing between these bridge principles, I will defend two of them. I will then consider the implications of various choices of bridge principle for the long-standing debates about the roles of relevance, necessity, and formality in our notion of logical consequence. The methodological aim of the talk is to provide an alternative to the usual brute appeals to our “intuitions” about logical consequence in these fundamental debates.

-

John MacFarlane, “Vagueness as Indecision”, Aristotelian Society Supplementary Volume 90 (2016), 255-283. [preprint] [published version]

This paper motivates and explores an expressivist theory of vagueness, modeled on Allan Gibbard’s (2003) normative expressivism. It shows how Chris Kennedy’s (2007) semantics for gradable adjectives can be adjusted to fit into a theory on Gibbardian lines, where assertions constrain not just possible worlds but plans for action. Vagueness, on this account, is literally indecision about where to draw lines. It is argued that the distinctive phenomena of vagueness, such as the intuition of tolerance, can be explained in terms of practical constraints on plans, and that the expressivist view captures what is right about several contending theories of vagueness.

-

John MacFarlane, “Précis, Assessment Sensitivity: Relative Truth and Its Applications”, Philosophy and Phenomenological Research 92 (2016), 168–170. [preprint] [published version]

A short summary of Assessment Sensitivity: Relative Truth and Its Applications.

-

John MacFarlane, “Replies to Raffman, Stanley, and Wright”, Philosophy and Phenomenological Research 92 (2016), 197–202. [preprint] [published version]

Replies to commentaries on Assessment Sensitivity: Relative Truth and Its Applications by Diana Raffman, Jason Stanley, and Crispin Wright.

-

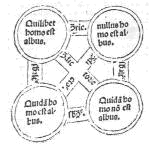

John MacFarlane, “Abelard’s Argument for Formality”, in Formal Approaches and Natural Language in Medieval Logic, ed. Laurent Cesalli and Alain de Libera and Frédéric Goubier (Barcelona, Roma: Brepols, 2015), 41–57. [preprint] [published version]

Fourteenth-century logicians define formal consequences as consequences that remain valid under uniform substitutions of categorematic terms, but they say little about the significance of this distinction. Why does it matter whether a consequence is formal or material? One possible answer is that, whereas the validity of a material consequence depends on both its structure and “the nature of things,” the validity of a formal consequence depends on its structure alone. But this claim does not follow from the definition of formal consequence by itself, and the fourteenth-century logicians do not give an argument for it. For that we must turn to Abelard, who argues explicitly that valid syllogisms take their validity from their construction alone, and not from “the nature of things.” I look at Abelard’s argument in its historical context. If my reconstruction is correct, the argument, and hence also the significance of the distinction between formal and material consequence, depends on a conception of “the nature of things” that we can no longer accept. This should give pause to contemporary thinkers who look to medieval notions of formal consequence as antecedents of their own.

-

John MacFarlane, “Review of Huw Price, Expressivism, Pragmatism, and Representationalism”, Notre Dame Philosophical Reviews 2014.02.09 (2014). [published version]

-

John MacFarlane, “Relativism”, in The Routledge Companion to the Philosophy of Language, ed. Delia Graff Fara and Gillian Russell (New York: Routledge, 2012), 132-142. [preprint]

A brief introduction to relativism in semantics, touching on motivations, technical issues, and philosophical issues.

-

John MacFarlane, “Richard on Truth and Commitment”, Philosophical Studies 106 (2012), 445-453. [preprint] [published version]

My contribution to a book symposium on Mark Richard’s book When Truth Gives Out.

-

John MacFarlane, “Simplicity Made Difficult”, Philosophical Studies 156 (2011), 441–448. [published version]

My contribution to a book symposium on Herman Cappelen and John Hawthorne’s book Relativism and Monadic Truth.

-

John MacFarlane, “What Is Assertion?”, in Assertion, ed. Jessica Brown and Herman Cappelen (Oxford: Oxford University Press, 2011), 79-96. [preprint]

What is it to make an assertion? The literature contains four broad categories of answers: (1) To assert is to express an attitude. (2) To assert is to make a move defined by its constitutive rules. (3) To assert is to propose to add information to the conversational common ground. (4) To assert is to undertake a certain sort of commitment. I articulate these views and discuss the motivations and advantages of each. My aim here is exploratory, not polemical: I want to see, among other things, how each view might account for the phenomena that motivate its competitors.

-

John MacFarlane, “Relativism and Knowledge Attributions”, in Routledge Companion to Epistemology, ed. Sven Bernecker and Duncan Pritchard (London: Routledge, 2011), 536-544. [preprint]

A handbook-style explanation of “relativist” semantics for knowledge attributions and its motivation.

-

John MacFarlane, “Epistemic Modals Are Assessment-Sensitive”, in Epistemic Modality, ed. Brian Weatherson and Andy Egan (Oxford: Oxford University Press, 2011), 144-178. [preprint]

This is my latest effort to tell the story sketched in my 2003 manuscript “Epistemic Modalities and Relative Truth.”

-

Niko Kolodny and John MacFarlane, “Ifs and Oughts”, Journal of Philosophy 107 (2010), 115-143. [preprint] [published version]

We consider a paradox involving indicative conditionals (“ifs”) and deontic modals (“oughts”). After considering and rejecting several standard options for resolving the paradox—including rejecting various premises, positing an ambiguity or hidden contextual sensitivity, and positing a non-obvious logical form—we offer a semantics for deontic modals and indicative conditionals that resolves the paradox by making modus ponens invalid. We argue that this is a result to be welcomed on independent grounds, and we show that rejecting the general validity of modus ponens is compatible with vindicating most ordinary uses of modus ponens in reasoning.

-

John MacFarlane, “Pragmatism and Inferentialism”, in Reading Brandom: On Making It Explicit, ed. Bernhard Weiss and Jeremy Wanderer (London: Routledge, 2010), 81-95. [preprint]

I discuss the connections between Brandom’s pragmatism and his inferentialism. I argue that pragmatism, as Brandom initially describes it – the view that “semantics must answer to pragmatics” – does not favor an inferentialist approach to semantics over a truth-conditional one. I then consider whether inferentialism might be motivated by a stronger kind of pragmatism, one that requires semantic concepts to be definable in terms of independently intelligible pragmatic concepts. Although this more stringent requirement does exclude truth-conditional approaches to semantics, it is not clear that Brandom’s own approach meets it.

-

John MacFarlane, “Fuzzy Epistemicism”, in Cuts and Clouds: Vagueness, Its Nature, and Its Logic, ed. Richard Dietz and Sebastiano Moruzzi (Oxford: Oxford University Press, 2010), 438-463. [preprint]

It is taken for granted in much of the literature on vagueness that semantic and epistemic approaches to vagueness are fundamentally at odds. If we can analyze borderline cases and the sorites paradox in terms of degrees of truth, then we don’t need an epistemic explanation. Conversely, if an epistemic explanation suffices, then there is no reason to depart from the familiar simplicity of classical bivalent semantics. I question this assumption, showing that there is an intelligible motivation for adopting a many-valued semantics even if one accepts a form of epistemicism. The resulting hybrid view has advantages over both classical epistemicism and traditional many-valued approaches.

-

John MacFarlane, “Double Vision: Two Questions About the Neo-Fregean Program”, Synthese 170 (2009), 443-456. [preprint] [published version]

Much of The Reason’s Proper Study is devoted to defending the claim that simply by stipulating an abstraction principle for the “number-of” functor, we can simultaneously fix a meaning for this functor and acquire epistemic entitlement to the stipulated principle. In this paper, I argue that the semantic and epistemological principles Wright and Hale offer in defense of this claim may be too strong for their purposes. For if these principles are correct, it is hard to see why they do not justify platonist strategies that are not in any way “neo-Fregean,” e.g. strategies that treat “the number of Fs” as a Russellian definite description rather than a singular term, or employ axioms that do not have the form of abstraction principles.

-

John MacFarlane, “Nonindexical Contextualism”, Synthese 166 (2009), 231-250.

Reprinted in What is Said and What is Not, ed. Carlo Penco and Filippo Domaneschi (Stanford: CSLI, 2013), 243-263. [preprint] [published version]

Philosophers on all sides of the contextualism debates have had an overly narrow conception of what semantic context sensitivity could be. They have conflated context sensitivity (dependence of truth or extension on features of context) with indexicality (dependence of content on features of context). As a result of this conflation, proponents of contextualism have taken arguments that establish only context sensitivity to establish indexicality, while opponents of contextualism have taken arguments against indexicality to be arguments against context sensitivity. Once these concepts are carefully pulled apart, it becomes clear that there is conceptual space in semantic theory for nonindexical forms of contextualism that have many advantages over the usual indexical forms.

-

John MacFarlane, “Brandom’s Demarcation of Logic”, Philosophical Topics 36 (2008), 55-62. [preprint]

This is a lightly edited version of my comments on Brandom’s Lecture 2, as delivered in Prague at the “Prague Locke Lectures” in April, 2007. I try to say why Brandom’s proposed demarcation is significant, by placing it in a broader context of demarcation proposals from Kant to the twentieth century. I then raise some questions about the basic ingredients of Brandom’s demarcation—the notions of PP-sufficiency and VP-sufficiency—and question whether the vocabulary of conditionals, Brandom’s paradigm for logical vocabulary, can be universal-LX.

-

John MacFarlane, “Boghossian, Bellarmine, and Bayes”, Philosophical Studies 141 (2008), 391-98. [preprint] [published version]

My contribution to a book symposium on Paul Boghossian’s Fear of Knowledge.

-

John MacFarlane, “Truth in the Garden of Forking Paths”, in Relative Truth, ed. Max Kölbel and Manuel García-Carpintero (Oxford: Oxford University Press, 2008), 81-102. [preprint]

Many philosophers have supposed that if possible worlds overlap and branch (as opposed to having qualitatively identical pasts and then “diverging”), our common sense talk about the future is deeply misguided. Some of them plump for common sense, others for branching. In this paper, I argue that the dilemma is a false one. It is possible to develop a semantics for tense and the historical modalities that vindicates common sense talk about the future and historical possibility, even if there is branching. However, this can only be done in a semantic framework that relativizes truth to contexts of assessment. (Note: The core ideas come from my earlier paper “Future Contingents and Relative Truth,” but I now believe the argument of that paper to be inadequate. Much of this paper is devoted to explaining why, and to developing a more robust argument for the same conclusion.)

-

John MacFarlane, “Semantic Minimalism and Nonindexical Contextualism”, in Context-Sensitivity and Semantic Minimalism: New Essays on Semantics and Pragmatics, ed. G. Preyer and G. Peter (Oxford: Oxford University Press, 2007), 240-50. [preprint]

According to Semantic Minimalism, every use of “Chiara is tall” (fixing the girl and the time) semantically expresses the same proposition, the proposition that Chiara is (just plain) tall. Given standard assumptions, this proposition ought to have an intension (a function from possible worlds to truth values). However, speakers tend to reject questions that presuppose that it does. I suggest that semantic minimalists might address this problem by adopting a form of “nonindexical contextualism,” according to which the proposition invariantly expressed by “Chiara is tall” does not have a context-invariant intension. Nonindexical contextualism provides an elegant explanation of what is wrong with “context-shifting arguments” and can be seen as a synthesis of the (partial) insights of semantic minimalists and radical contextualists.

-

John MacFarlane, “The Logic of Confusion”, Philosophy and Phenomenological Research 74 (2007), 700-708. [preprint] [published version]

In Confusion: A Study in the Theory of Knowledge, Joseph Camp argues that the reasoning of a person who has confused two objects in her thought and talk ought to be appraised using a four-valued relevance logic. I discuss two key moves in Camp’s argument: the assumption that charity to the reasoner requires recognition of her arguments as valid, and the argument that validity for a truth-valueless discourse should not be defined in terms of truth preservation. I then question whether Camp’s four-valued semantics satisfies his own desiderata for a logic of confusion.

-

John MacFarlane, “Relativism and Disagreement”, Philosophical Studies 132 (2007), 17-31. [preprint]

In arguing about whether some food is “delicious,” whether some joke is “funny,” or whether some outcome is “likely,” we take ourselves to be disagreeing with each other. This disagreement goes missing on contextualist accounts of these adjectives, which take them to express relations to the speaker. Relativist accounts claim to do better, securing genuine disagreement in discourse about what is “delicious,” “funny,” or “likely,” while respecting the “subjectivity” of such discourse. But do relativist accounts really make sense of disagreement? If the proposition that apples are delicious can be true from one perspective but false from another, why should we say that one who accepts this proposition while occupying the first perspective disagrees with one who rejects it while occupying the second perspective? Answering this question requires thinking carefully about what it is to disagree with someone.

-

John MacFarlane, “The Things We (Sorta Kinda) Believe”, Philosophy and Phenomenological Research 73 (2006), 218-224. [preprint] [published version] (See also Schiffer’s reply and my response to Schiffer’s reply.)

In Chapter 5 of The Things We Mean, Stephen Schiffer rejects semantic approaches to vagueness, proposing instead a “psychological” theory that analyzes our attitudes toward vague propositions as a mixture of standard (uncertainty-related) partial belief (SPB) and a special kind of vagueness-related partial belief (VPB). I show that Schiffer’s theory of SPBs and VPBs is inconsistent, and I argue that his reasons for rejecting semantic approaches (particularly degree theories) are inconclusive.

-

John MacFarlane, “Logical Constants”, Stanford Encyclopedia of Philosophy (2005, substantive revision 2009, substantive revision 2015). [published version]

A critical survey of approaches to the problem of demarcating the “logical constants.”

-

John MacFarlane, “The Assessment Sensitivity of Knowledge Attributions”, Oxford Studies in Epistemology 1 (2005), 197-233.

Reprinted in Epistemology: An Anthology (second edition), ed. Ernest Sosa and Jaegwon Kim and Jeremy Fantl and Matthew McGrath (Oxford: Blackwell, 2008). [preprint]

Current debates about the semantics of knowledge-attributing sentences center on whether the epistemic standards relevant to the truth of such sentences vary with the context of use, the circumstances of evaluation, or neither. I argue that although the strict invariantists are right that the standards do not vary in either of these ways, the contextualists are also right to think that there is some kind of contextual variation in the standards. On the semantics I propose, the relevant epistemic standard varies not with the context of use, but with the context of assessment: the concrete context in which an utterance is being assessed for truth or falsity. The price of this reconciliation of contextualism and invariantism is that I must explain what it means to talk of truth relative to a context of assessment. I discharge this obligation by describing the role assessment-relative truth plays in a normative account of assertion.

-

John MacFarlane, “Making Sense of Relative Truth”, Proceedings of the Aristotelian Society 105 (2005), 321-39.

Reprinted in Relativism: A Compendium, ed. Michael Krausz (New York: Columbia University Press, 2010).

Reprinted as “Dare un senso alla verità relativa”, Tropos 3 (2010). [preprint]

The goal of this paper is to make sense of relativism about truth. There are two key ideas. (1) To be a relativist about truth is to allow that a sentence or proposition might be assessment-sensitive; that is, its truth value might vary with the context of assessment as well as the context of use. (2) Making sense of relativism is a matter of understanding what it would be to commit oneself to the truth of an assessment-sensitive sentence or proposition.

-

John MacFarlane, “Knowledge Laundering: Testimony and Sensitive Invariantism”, Analysis 65 (2005), 132-8. [published version]

According to “sensitive invariantism,” the word “know” expresses the same relation in every context of use, but what it takes to stand in this relation to a proposition can vary with the subject’s circumstances. Sensitive invariantism looks like an attractive reconciliation of invariantism and contextualism. However, it is incompatible with a widely-held view about the way knowledge is transmitted through testimony. If both views were true, someone whose evidence for p fell short of what was required for knowledge in her circumstances could come to know that p simply by feeding her evidence to someone in less demanding circumstances and then accepting his testimony.

-

John MacFarlane, “McDowell’s Kantianism”, Theoria 70 (2004), 250-265. [preprint]

In recent work, John McDowell has urged that we resurrect the Kantian thesis that “concepts without intuitions are empty.” I distinguish two forms of the thesis: a strong form that applies to all concepts and a weak form that is limited to empirical concepts. Because he rejects Kant’s philosophy of mathematics but is not willing to claim that all content is empirical, McDowell can accept only the weaker form of the thesis. But this position is unstable. Insisting that empirical concepts can have rational relation to their objects only if they are actualizable in passive experience makes it mysterious why the deployment of pure mathematical concepts is not a mere “game with symbols.” Historically, it was anxiety about the possibility of mathematical content, and not worries about the “myth of the given,” that spurred the retreat from Kantian views of empirical content. McDowell owes us some more therapy on this score.

-

John MacFarlane, “Review of Myles Burnyeat, A Map of Metaphysics Zeta”, The Philosophical Review 112 (2003), 97-99. [published version]

-

John MacFarlane, “Future Contingents and Relative Truth”, The Philosophical Quarterly 53 (2003), 321-36.

Reprinted in Freedom, Fatalism, and Foreknowledge, ed. J. M. Fischer and Patrick Todd (Oxford: Oxford University Press, 2014). [published version] (Winner of The Philosophical Quarterly Essay Prize 2002.)

If it is not now determined whether there will be a sea battle tomorrow, can an assertion that there will be one be true? The problem has persisted because there are compelling arguments on both sides. If there are objectively possible futures witnessing the truth of the prediction and others witnessing its falsity, symmetry considerations seem to forbid counting it either true or false. Yet if we think about how we will assess the prediction tomorrow, when a sea battle is raging (or not), it seems we must assign the utterance a definite truth value. I argue that both arguments must be given their due, and that this requires relativizing utterance truth to a context of assessment. I show how this relativization can be handled in a rigorous formal semantics, and I argue that we can make coherent sense of assertion without assuming that utterances have their truth values absolutely.

-

John MacFarlane, “Review of Colin McGinn, Logical Properties: Identity, Existence, Predication, Necessity, Truth”, The Philosophical Review 111 (2002), 534-7. [published version]

-

John MacFarlane, “Frege, Kant, and the Logic in Logicism”, The Philosophical Review 111 (2002), 25-65.

Reprinted in Gottlob Frege: Critical Assessments of Leading Philosophers, vol. 1, ed. Michael Beaney and Erich Reck (New York: Routledge, 2005). [published version]

In arguing that arithmetic is reducible to logic and definitions, Frege presents himself as contradicting a thesis of Kant’s. But Kant holds that (general) logic must be “formal,” and Frege’s logical system is not formal in Kant’s sense. Is their disagreement partly a verbal one, then, about the meaning of “logic”? Appealing to textual and historical evidence as well as philosophical reconstruction, I argue (a) that Kant and Frege mean the same thing by “logic,” and (b) that Kant’s claim that logic is formal is a substantive thesis of his critical philosophy, not part of a definition.

-

John MacFarlane, “Review of Stephen Neale, Facing Facts”, Notre Dame Philosophical Reviews 2002.08.15 (2002). [published version]

-

John MacFarlane, “Review of Michael Potter, Reason’s Nearest Kin: Philosophies of Arithmetic from Kant to Carnap”, Journal of the History of Philosophy 39 (2001), 454-6. [published version]

-

John MacFarlane, “Aristotle’s Definition of Anagnôrisis”, American Journal of Philology 121 (2000), 367-383. [published version]

I argue for a new construal of Aristotle’s definition of anagnorisis (recognition) in Poetics 11. Virtually all translators and interpreters of the definition have understood the phrase ton pros eutuchian e dustuchian horismenon as a subjective genitive characterizing the persons involved in the recognition. I argue that it should instead be taken as a partitive genitive characterizing the genus of changes (metabolon) of which recognitions are a species. In addition to being preferable on philological grounds, the construal I recommend helps illuminate the relation between recognition and reversal (peripeteia) and makes sense of Aristotle’s views about the relative values of various kinds of recognition.